How AI and Software Testing Are Shaping the Future of Digital Enterprises

Quick Summary:

In this blog, we explore how AI in software testing, often called AI testing, is transforming digital enterprises. We look at how the two fields are converging, analyze practical uses such as self-healing tests and predictive test selection, discuss challenges and mitigation strategies, and look ahead to emerging trends. We also examine how service providers like ImpactQA can assist firms in this shift.

Table of Contents:

- The Rise of AI in Quality Assurance

- What Is AI Testing & Why It Matters

- Practical Applications of AI in Software Testing

- Challenges, Risks, and Mitigation Strategies

- Future Trends: AI and Software Testing in the Next Decade

- How Enterprises Must Adapt to Leverage AI in Testing

- Conclusion

The Rise of AI in Quality Assurance

In the digital era, enterprises are under pressure to ship high-quality software rapidly. Traditional manual and rule-based test methods struggle to keep pace with release cycles or adapt to change. Against this backdrop, AI for software testing is gaining traction because intelligent techniques allow test suites to evolve, prioritize, and even repair themselves.

At its heart, the notion of AI in testing is not about replacing human testers but about augmenting them. It enables teams to sift through vast amounts of telemetry, trace dependencies, and respond to shifts in application behavior. As digital businesses scale, software quality becomes a competitive differentiator. Thus, the convergence of AI and software testing is now a strategic move for future-ready organizations.

What Is AI Testing & Why It Matters

In software quality assurance, AI testing refers to the use of artificial intelligence techniques (such as machine learning, predictive analytics, and natural language processing) to improve or automate parts of the testing lifecycle. When we say AI in software testing, we emphasize embedding AI capabilities into test design, execution, maintenance, and reporting. Meanwhile, AI in testing is a slightly broader term, encompassing AI in test planning, defect prediction, test environment generation, and more.

Why is it important? Traditional test automation often follows rigid scripts. When the UI changes slightly, locators break; when new features arrive, test scripts must be updated. This fragility leads to high maintenance effort. With AI-assisted testing, the system can learn patterns, predict breakages, and adapt. For instance, AI models may analyze historical test results and code changes to decide which tests are relevant for a given build (test impact analysis).

Moreover, AI for software testing enables predictive defect detection, which spots parts of the codebase likely to fail, and test data generation that covers corner cases intelligently rather than by brute force. Some more advanced implementations even employ generative AI, for example to generate test cases, assertions, or test logic automatically from requirements or past data.

In practice, AI can assist in:

- Self-healing Tests: Adjusting locators or wait logic when UI changes

- Test Prioritization: Selecting which subset of tests to run in a pipeline

- Defect Prediction: Forecasting modules likely to contain bugs

- Test Generation: Deriving new test scripts from usage logs or specifications

Practical Applications of AI in Software Testing

Enterprises are rapidly adopting intelligent methods to build resilient and adaptive quality assurance pipelines. The following use cases show how AI testing and AI-driven test automation are shaping the digital shift:

1. Self-Healing UI and Locators

One of the most visible uses is self-healing test scripts. When a UI element’s property changes, an AI-assisted framework identifies a replacement selector, adjusts the test logic, and repairs the test automatically. This reduces the burden of maintaining brittle suites.
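To make the idea concrete, here is a minimal sketch of locator healing, assuming page elements are available as plain attribute dictionaries. The names `heal_locator`, `last_known`, and `page` are hypothetical, and simple string similarity stands in for whatever learned model a real tool would use.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two attribute values."""
    return SequenceMatcher(None, a, b).ratio()


def heal_locator(last_known: dict, candidates: list, threshold: float = 0.6):
    """Pick the candidate element that best matches the last known attributes.

    Returns (element, score), or (None, best_score) when nothing is close
    enough, so the test fails loudly instead of acting on the wrong element.
    """
    best, best_score = None, 0.0
    for cand in candidates:
        scores = [
            similarity(str(value), str(cand.get(key, "")))
            for key, value in last_known.items()
        ]
        score = sum(scores) / len(scores) if scores else 0.0
        if score > best_score:
            best, best_score = cand, score
    if best_score < threshold:
        return None, best_score
    return best, best_score


# Hypothetical snapshot of the element as it looked when the test last passed.
last_known = {"id": "submit-btn", "text": "Submit", "tag": "button"}
# Hypothetical elements found on the page after a UI change renamed the id.
page = [
    {"id": "nav-home", "text": "Home", "tag": "a"},
    {"id": "submit-button", "text": "Submit", "tag": "button"},
]
element, score = heal_locator(last_known, page)
print(element, round(score, 2))
```

The threshold is the important design choice here: set it too low and the test may silently "heal" onto an unrelated element, which is why proposed repairs are usually surfaced for review rather than applied blindly.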

2. Test Impact Analysis and Intelligent Selection

Instead of executing every test after each code change, AI in testing can determine which tests are most relevant by analyzing dependencies and coverage. This saves time and resources while ensuring quality.
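In its simplest form, test impact analysis is a lookup from changed files to the tests that exercise them. The sketch below assumes a coverage map has already been collected; the map, the file names, and `select_tests` are illustrative, and an AI-based selector would typically add a learned ranking on top of this filter.

```python
from typing import Dict, List, Set


def select_tests(coverage_map: Dict[str, Set[str]], changed_files: Set[str]) -> List[str]:
    """Return the tests whose covered files overlap with the changed files."""
    return sorted(
        test for test, files in coverage_map.items() if files & changed_files
    )


# Hypothetical coverage data: which source files each test touches.
coverage_map = {
    "test_checkout": {"cart.py", "payment.py"},
    "test_login": {"auth.py"},
    "test_search": {"search.py", "catalog.py"},
}
changed = {"payment.py", "catalog.py"}
selected = select_tests(coverage_map, changed)
print(selected)
```

Tests that touch none of the changed files are skipped for this build, which is where the time savings come from.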

3. Automated Test Case Generation

With generative AI in software testing, test cases and assertions can be derived from requirement artifacts, user stories, or even production usage data. This helps uncover additional scenarios, including edge and negative cases.

4. Predictive Defect Detection

By analyzing historical defects, code churn, and complexity metrics, AI in software testing can forecast modules that are more susceptible to errors. Teams can then prioritize testing efforts on higher-risk areas.
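As a rough illustration, the ranking step can be sketched with a hand-weighted score over the same signals. This is a heuristic stand-in for a trained model, not a real predictor; the `ModuleStats` fields, the weights, and the sample numbers are all assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ModuleStats:
    name: str
    churn: int          # lines changed in the recent period
    complexity: int     # e.g. a cyclomatic complexity total
    past_defects: int   # defects previously traced to this module


def rank_by_risk(
    modules: List[ModuleStats],
    weights: Tuple[float, float, float] = (0.4, 0.2, 0.4),
) -> List[Tuple[str, float]]:
    """Rank modules by a weighted sum of max-normalized risk signals."""
    if not modules:
        return []

    def norm(values: List[int]) -> List[float]:
        top = max(values) or 1  # avoid dividing by zero when all values are 0
        return [v / top for v in values]

    churn = norm([m.churn for m in modules])
    complexity = norm([m.complexity for m in modules])
    defects = norm([m.past_defects for m in modules])
    w_churn, w_complexity, w_defects = weights
    scored = [
        (m.name, round(w_churn * c + w_complexity * x + w_defects * d, 3))
        for m, c, x, d in zip(modules, churn, complexity, defects)
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical metrics for three modules.
ranking = rank_by_risk([
    ModuleStats("profile", churn=50, complexity=10, past_defects=0),
    ModuleStats("checkout", churn=500, complexity=40, past_defects=12),
    ModuleStats("search", churn=100, complexity=60, past_defects=2),
])
print(ranking)
```

In practice the weights would be learned from labeled defect history rather than chosen by hand, but the output is used the same way: test the top of the list first.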

5. API and Integration Test Automation

In microservice and distributed environments, AI in software test automation can design API-level test suites from traffic logs or specifications, strengthening coverage across critical integrations.

6. Visual Validation and Regression Detection

Leveraging vision-based techniques, AI-assisted testing can distinguish significant UI regressions from minor rendering changes. This minimizes false alarms and helps teams focus on impactful issues.
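The core idea of separating noise from real regressions can be sketched with a tolerance-based pixel comparison. This is a deliberately simple stand-in for the vision models described above: images are assumed to be equal-sized grids of grayscale values, and both thresholds are arbitrary illustrative defaults.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel values, 0..255


def changed_ratio(baseline: Image, current: Image, pixel_tolerance: int = 10) -> float:
    """Fraction of pixels whose brightness differs by more than the tolerance."""
    if len(baseline) != len(current) or any(
        len(a) != len(b) for a, b in zip(baseline, current)
    ):
        raise ValueError("images must have identical dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for row_a, row_b in zip(baseline, current)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > pixel_tolerance
    )
    return changed / total


def is_significant_regression(
    baseline: Image,
    current: Image,
    pixel_tolerance: int = 10,
    area_threshold: float = 0.02,
) -> bool:
    """Flag a regression only when enough of the image changed noticeably."""
    return changed_ratio(baseline, current, pixel_tolerance) > area_threshold


# Hypothetical 10x10 screenshots: one with rendering noise, one with a real change.
baseline = [[200] * 10 for _ in range(10)]
noisy = [[203] * 10 for _ in range(10)]
broken = [row[:] for row in baseline]
broken[0][:5] = [0] * 5  # five pixels turned black

print(is_significant_regression(baseline, noisy))
print(is_significant_regression(baseline, broken))
```

The per-pixel tolerance absorbs anti-aliasing and compression noise, while the area threshold decides how much of the screen must change before anyone is alerted.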

7. Low-Code and Natural Language Testing

Using AI in testing, teams can create automation with natural language prompts or simplified workflows. This widens accessibility and allows non-technical users to contribute to QA.

These applications demonstrate how AI and software testing converge to create adaptive, intelligent pipelines that remain reliable even as digital enterprises scale and evolve.

Challenges, Risks, and Mitigation Strategies

While AI in software testing brings many advantages, there are nontrivial challenges. Understanding them and preparing for them is critical for adoption success.

1. Data Availability & Quality

AI and ML models require quality, labeled training data. Many teams lack clean historical test logs, bug-label mappings, or telemetry data. Poor or biased data may lead to flawed predictions or misguided outcomes.

Mitigation: Begin collecting consistent logs, versioned test results, bug metadata and code metrics early. Use synthetic data augmentation and human validation loops to bootstrap models.

2. Model Transparency & Explainability

AI decisions (e.g., why a test was skipped or repaired) can be opaque. Without interpretable feedback, testers may distrust the system.

Mitigation: Use explainable AI methods (feature importance, attention maps). Make repair proposals visible and allow human override. Track audit logs for changes.

3. Overfitting & Drift

Models trained on one codebase or period may fail when project structure or patterns shift. This leads to model drift, degraded performance, or false negatives.

Mitigation: Retrain periodically, monitor performance metrics (false negative/positive rates), and incorporate online learning or adaptation strategies. Use human feedback loops.
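A minimal sketch of such monitoring follows, assuming each record pairs the model's prediction with the observed outcome. The record layout, the `needs_retraining` helper, and the 10-point threshold are assumptions chosen for illustration.

```python
from typing import List, Tuple

# Each record is (model predicted a failure, the failure actually occurred).
Record = Tuple[bool, bool]


def error_rates(records: List[Record]) -> Tuple[float, float]:
    """Return (false positive rate, false negative rate) for a set of records."""
    false_pos = sum(1 for predicted, actual in records if predicted and not actual)
    false_neg = sum(1 for predicted, actual in records if not predicted and actual)
    negatives = sum(1 for _, actual in records if not actual)
    positives = sum(1 for _, actual in records if actual)
    fpr = false_pos / negatives if negatives else 0.0
    fnr = false_neg / positives if positives else 0.0
    return fpr, fnr


def needs_retraining(
    baseline: List[Record], recent: List[Record], max_increase: float = 0.10
) -> bool:
    """Flag drift when either error rate rises past the allowed increase."""
    base_fpr, base_fnr = error_rates(baseline)
    recent_fpr, recent_fnr = error_rates(recent)
    return (recent_fpr - base_fpr) > max_increase or (recent_fnr - base_fnr) > max_increase


# Hypothetical evaluation windows: the model used to be perfect, then began
# missing real failures.
baseline_window = [(True, True)] * 5 + [(False, False)] * 5
recent_window = [(True, True)] * 3 + [(False, True)] * 2 + [(False, False)] * 5

print(error_rates(recent_window))
print(needs_retraining(baseline_window, recent_window))
```

Tracking the two rates separately matters because a rising false negative rate (missed failures) is usually far more costly than a rising false positive rate (extra tests run).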

4. False Repairs or Broken Fixes

In self-healing or test repair, an AI may propose a flawed fix, leading to undetected defects or false positives.

Mitigation: Always include a manual review phase before merging suggested repairs. Use version control branches to isolate AI-generated changes so they can be reverted if needed.

5. Security, Privacy & Compliance Risks

When dealing with regulated domains (finance, healthcare), generative AI or test automation may violate compliance or leak data.

Mitigation: Enforce policies that block AI use on sensitive modules until approved. Mask or sanitize data before feeding into AI. Maintain traceability of test decisions.
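The masking step might look like the sketch below, which redacts email addresses and card-like digit runs before text is passed to any model. The patterns are simplified assumptions for illustration and are not sufficient for real compliance work, where a vetted data-masking tool should be used.

```python
import re

# Simplified, illustrative patterns; real PII detection needs far more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def sanitize(text: str) -> str:
    """Replace emails and card-like numbers with placeholder tokens."""
    text = EMAIL.sub("<EMAIL>", text)
    return CARD.sub("<CARD>", text)


# Hypothetical log line containing sensitive values.
log_line = "user jane.doe@example.com paid with 4111 1111 1111 1111 today"
clean = sanitize(log_line)
print(clean)
```

Running the sanitizer at the boundary, before data leaves the test environment, keeps the rule enforceable regardless of which AI tool sits downstream.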

6. Integration and Tooling Barriers

Legacy systems, test frameworks, or CI pipelines may resist AI tool integration. Teams may resist adopting new workflows.

Mitigation: Roll out incrementally (for example, pilot in new modules first). Use adapters or wrappers around existing test infrastructure. Train teams gradually.

7. Resource and Compute Costs

Running AI models, especially generative or vision models, requires significant compute, memory, and often GPU capacity. The costs may initially outweigh the benefits.

Mitigation: Use lightweight models, threshold-based activation (run AI only when needed), cloud resources with autoscaling, or hybrid on-demand execution.

Future Trends: AI and Software Testing in the Next Decade

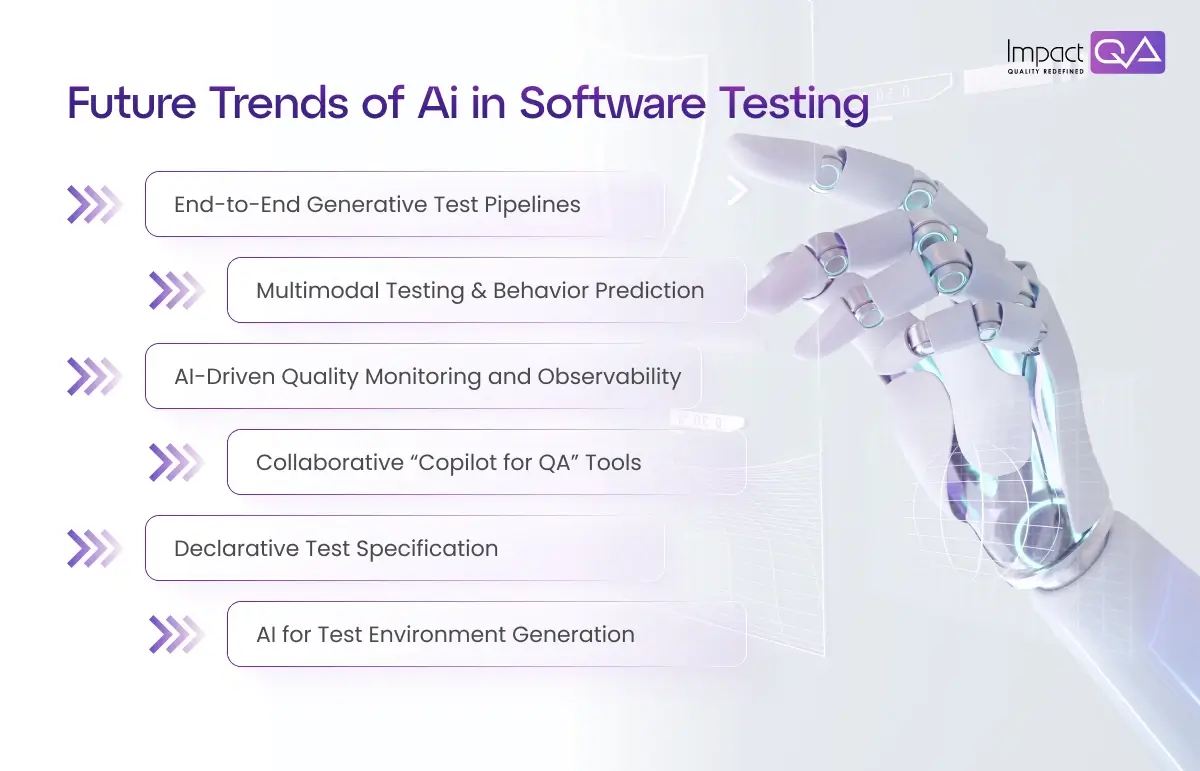

Looking ahead, several trends are emerging in how AI in software testing will evolve and reshape digital engineering. The trends below point to a future where AI in testing is deeply embedded, continuously adaptive, and spans the full engineering stack.

1. End-to-End Generative Test Pipelines

Rather than piecemeal assistance, future systems will ingest requirements, commit diffs, and autonomously produce, validate, and evolve full test suites with minimal human intervention. This is the domain of generative AI in software testing combined with automated verification loops. Advances in LLMs plus retrieval-augmented frameworks point toward this direction.

2. Multimodal Testing & Behavior Prediction

Future systems may ingest logs, UI screenshots, system metrics, and user feedback to anticipate failures or performance regressions. This blends vision, time-series and NLP modalities into predictive QA.

3. AI-Driven Quality Monitoring and Observability

Test automation will blur into observability, where production traces, error logs, and real user metrics feed into the same AI models that drive test decisions. This shift is creating self-validating systems.

4. Collaborative “Copilot for QA” Tools

Just like code copilots, QA copilots will suggest tests, highlight suspicious modules, and help even junior testers be highly effective by drawing on underlying models for guidance as they work.

5. Declarative Test Specification

Rather than writing scripts, users will describe intent (“verify the new discount logic under various promos”) and AI will generate tests. This declarative approach will lower the barrier for non-engineers.

6. AI for Test Environment Generation

Creating mock services, dependent systems, or synthetic data is laborious. AI will synthesize realistic virtual services or test data from traffic logs, contracts, or behaviors. This will automate test environment setup.

How Enterprises Must Adapt to Leverage AI in Testing

To make this shift in practice, enterprises need more than tools; they must evolve mindsets, processes, and capabilities.

1. Build the Foundation with Data Hygiene

Begin by enforcing strict logging, traceability, version control, and metadata collection. Capture test outcomes, code metrics, change history, and defect mapping. Without clean data, AI cannot deliver reliably.

2. Start Small, Iterate, and Expand

Adopt AI in limited areas first, such as self-healing UI, test prioritization, or predictive defect ranking. Monitor results, refine, and only then expand to full generative pipelines. This lowers risk and builds confidence.

3. Blend Human and AI Decision Boundaries

Define clear boundaries: AI handles volume, consistency, and detection; humans handle intent, edge cases, and oversight. Provide review gates for AI-generated repairs or tests.

4. Upskill QA Teams

QA personnel need training in AI models, metrics, feature engineering, and interpreting outputs. QA professionals should become “test architects” who supervise AI systems rather than just execute scripts.

5. Choose Flexible Tooling & Architecture

Prefer test tools and frameworks that offer open APIs or plugin models so AI modules can integrate or be swapped. Avoid black-box systems that don’t allow adaptation.

6. Monitor and Govern AI Behavior

Continuously track accuracy, drift, false positives, and time savings. Create dashboards, alerts, and audit trails. In regulated settings, maintain logs for compliance.

7. Iterate in Production

Leverage canary deployments or shadow testing to validate AI-generated test updates before fully enabling them. This helps catch misbehaving automation before it causes damage.

Conclusion

In the evolving digital enterprise, combining AI in software testing with intelligent automation is propelling QA from a passive gate to an active engine. Deep learning, predictive models, and generative techniques are gradually enabling autonomous test pipelines, but only when combined with human judgment, robust governance, and ongoing adaptation. In that transition, providers such as ImpactQA play a crucial role, offering tailored AI-ML testing services that cover self-healing scripts, predictive analytics, cognitive feature validation, and more.

ImpactQA aligns with the future by embedding AI in testing across the SDLC, integrating with clients’ pipelines, and offering feedback loops to continuously improve model performance. Our expertise ensures clients don’t just adopt tools but build resilient systems that evolve. As digital enterprises move forward, AI-powered quality assurance guided by expert partners will determine who delivers software reliably, quickly, and consistently.