How Enterprises Can Measure Real Business Value and ROI from AI Testing in 2026

Quick Summary:

Measuring the return on investment from AI-led testing is no longer optional for enterprises aiming to control risk, cost, and release velocity. This blog explains how to quantify ROI from AI testing using business-aligned metrics, structured frameworks, and engineering insights. It also explores practical strategies, challenges, and governance considerations required to translate AI in software testing into measurable business value in 2026.

Table of Contents:

- Introduction

- Reframing ROI Measurement for AI-Driven Testing

- Key Value Drivers of AI Testing in Enterprise Environments

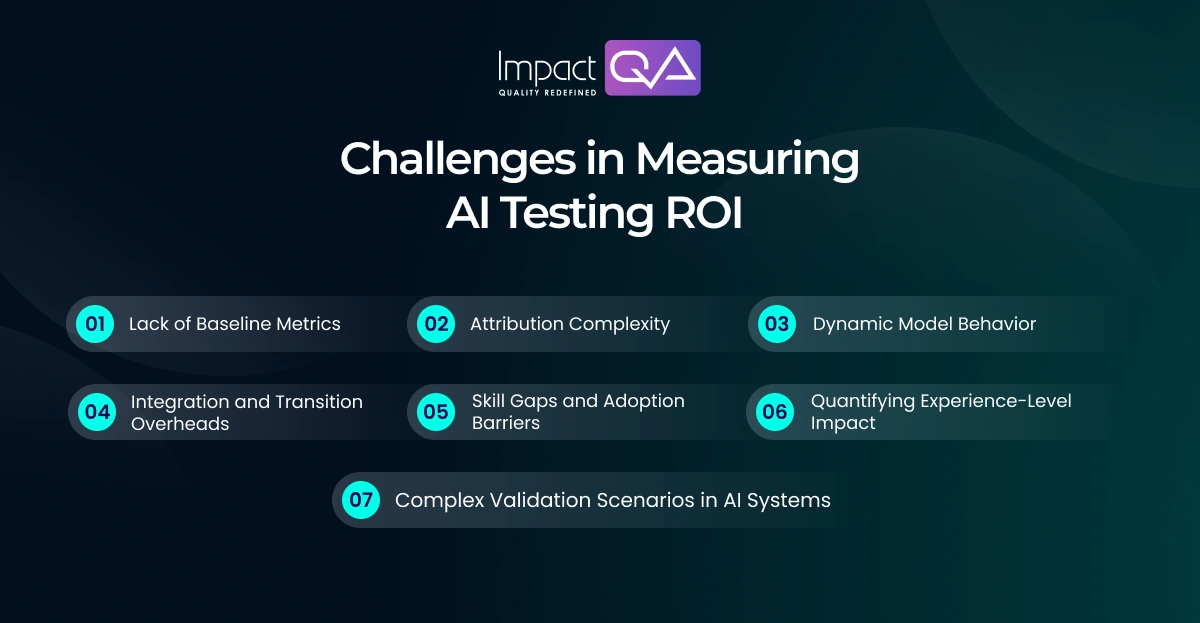

- Challenges in Measuring AI Testing ROI

- Frameworks and Metrics to Quantify Business Impact

- Best Practices and Strategic Implementation Approaches

- Final Thoughts

Introduction

Enterprises are investing heavily in AI testing capabilities, yet many still struggle to connect these initiatives with measurable business outcomes. While automation has long been associated with efficiency gains, AI in software testing introduces a different dimension. It brings intelligence-driven validation that adapts, predicts, and optimizes testing workflows. This shift demands a more outcome-oriented approach to measuring ROI.

According to a recent report by Gartner, over 60 percent of organizations are expected to integrate AI into their testing strategies by 2026, yet fewer than half have defined clear ROI measurement models. This gap highlights a growing need to align AI testing initiatives with business performance indicators such as release predictability, system resilience, and customer experience.

At the same time, the adoption of advanced AI testing solutions and specialized AI testing services is accelerating across industries. Leadership teams now expect clear evidence of value in terms of reduced business risk, improved customer experience, and faster innovation cycles. This makes it essential to define how AI testing contributes to real enterprise outcomes rather than isolated testing metrics.

Reframing ROI Measurement for AI-Driven Testing

Traditional ROI models in testing focused on effort reduction, automation coverage, and defect leakage. While these remain relevant, they do not fully capture the value delivered by AI testing. Enterprises must expand measurement models to include system stability, release confidence, and business continuity.

AI in software testing introduces predictive validation. Instead of relying only on execution outcomes, it evaluates historical data, code changes, and usage patterns to prioritize testing efforts. This reduces exposure to high-impact failures and improves decision-making across release cycles.
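
The prioritization idea described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular AI testing product works: the `CandidateTest` fields, the fixed `WEIGHTS`, and the sample suite are all hypothetical, and a real system would typically learn its weights from historical data rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    name: str
    historical_failure_rate: float  # share of past runs that failed, 0..1
    touches_changed_code: bool      # covers files changed in this release
    usage_weight: float             # relative traffic of the covered feature, 0..1


# Hypothetical weights chosen for illustration only.
WEIGHTS = {"history": 0.5, "change": 0.3, "usage": 0.2}


def risk_score(test: CandidateTest) -> float:
    """Combine the three signals into a single weighted risk score."""
    return (
        WEIGHTS["history"] * test.historical_failure_rate
        + WEIGHTS["change"] * (1.0 if test.touches_changed_code else 0.0)
        + WEIGHTS["usage"] * test.usage_weight
    )


def prioritize(tests: list[CandidateTest], budget: int) -> list[CandidateTest]:
    """Return the `budget` highest-risk tests, highest score first."""
    return sorted(tests, key=risk_score, reverse=True)[:budget]


# Hypothetical regression suite.
suite = [
    CandidateTest("checkout_flow", 0.20, True, 0.9),
    CandidateTest("profile_settings", 0.02, False, 0.1),
    CandidateTest("search_filters", 0.10, True, 0.5),
    CandidateTest("legacy_export", 0.30, False, 0.05),
]

selected = [t.name for t in prioritize(suite, budget=2)]
print(selected)
```

With these sample values, the two tests that touch changed code and cover heavily used features rank first, even though `legacy_export` has the highest historical failure rate.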

A structured evaluation should focus on the following dimensions:

- Operational Efficiency Gains: AI-driven orchestration minimizes redundant execution paths and optimizes test suite utilization, reducing compute overhead and improving pipeline throughput.

- Risk Reduction and Defect Prevention: Pattern-based defect intelligence improves early identification of high-severity failure scenarios, lowering production defect density and incident frequency.

- Faster Time to Market: Dynamic prioritization models reduce regression cycle duration, enabling faster and more stable release rollouts.

- User Experience Consistency: Context-aware validation ensures application behavior remains stable across real-world usage conditions, improving interaction reliability.

Emerging areas such as LLM testing and chatbot testing further extend ROI by validating response accuracy, contextual alignment, and conversational stability in AI-driven interfaces. A robust ROI model, therefore, integrates predictive accuracy, system reliability, and business continuity metrics into a unified evaluation framework.
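
Whatever dimensions feed the model, the final step is standard ROI arithmetic: net benefit divided by cost. The sketch below maps one monetized benefit figure to each of the four dimensions listed above. Every amount and category name is a hypothetical placeholder, not a benchmark.

```python
def roi(total_benefit: float, total_cost: float) -> float:
    """Standard ROI: net benefit as a fraction of cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


# Hypothetical annual benefits, one per evaluation dimension.
benefits = {
    "operational_efficiency": 120_000,  # compute and effort saved
    "risk_reduction": 200_000,          # avoided incident costs
    "time_to_market": 80_000,           # value of shorter regression cycles
    "user_experience": 50_000,          # retained revenue from fewer failures
}

# Hypothetical annual costs of the AI testing program.
costs = {
    "licenses_and_compute": 150_000,
    "integration_and_training": 100_000,
}

total_benefit = sum(benefits.values())
total_cost = sum(costs.values())

annual_roi = roi(total_benefit, total_cost)
payback_months = 12 * total_cost / total_benefit

print(f"ROI: {annual_roi:.0%}, payback: {payback_months:.1f} months")
```

The hard part in practice is not this calculation but defending the benefit estimates, which is why the baseline and attribution issues discussed later matter.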

Key Value Drivers of AI Testing in Enterprise Environments

AI testing transforms validation from a support function into a decision-enabling layer within engineering. Its impact is most visible in how testing adapts to system changes while maintaining execution continuity and insight generation.

Another critical shift comes from embedding intelligence within execution layers. Instead of static validation cycles, AI-driven systems continuously refine test relevance based on code evolution, usage patterns, and historical outcomes.

- Intelligent Test Coverage Expansion: AI models analyze code changes, usage telemetry, and historical defect clusters to identify untested execution paths and generate high-impact test scenarios.

- Self-Healing Test Execution: Element recognition models dynamically update locators and interaction paths, reducing script breakage caused by UI or API changes.

- Data-Driven Quality Intelligence: Testing outputs are correlated with defect density trends, failure patterns, and environment variables to produce actionable quality insights.

- Continuous Validation Across Pipelines: Event-triggered execution ensures validation is aligned with code commits, configuration changes, and deployment events. This helps reduce dependency on scheduled cycles.

- Validation for Distributed and Agentic Systems: AI testing frameworks extend coverage to decentralized architectures to ensure consistency across autonomous components and service interactions.
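
To make the self-healing idea concrete, here is a toy sketch in Python. It assumes a page represented as a list of attribute dictionaries and uses a simple attribute-overlap heuristic as a stand-in for the element recognition models mentioned above. The names `PAGE`, `similarity`, and `locate_with_healing`, and the 0.6 threshold, are hypothetical and do not come from any real testing framework.

```python
from typing import Optional

# Toy stand-in for a rendered page: each element is a dict of attributes.
PAGE = [
    {"id": "btn-submit-v2", "text": "Place order", "role": "button"},
    {"id": "nav-home", "text": "Home", "role": "link"},
]


def find_by_id(page: list[dict], element_id: str) -> Optional[dict]:
    """Exact lookup by id, as a conventional scripted locator would do."""
    return next((el for el in page if el["id"] == element_id), None)


def similarity(expected: dict, candidate: dict) -> float:
    """Fraction of the expected attributes that the candidate matches."""
    matches = sum(1 for key, value in expected.items() if candidate.get(key) == value)
    return matches / len(expected)


def locate_with_healing(page: list[dict], locator: dict, threshold: float = 0.6):
    """Return (element, healed). Falls back to the closest match if the id is stale."""
    element = find_by_id(page, locator["id"])
    if element is not None:
        return element, False
    best = max(page, key=lambda c: similarity(locator, c), default=None)
    if best is not None and similarity(locator, best) >= threshold:
        return best, True
    return None, False


# The script still references the old id; text and role are unchanged.
stale_locator = {"id": "btn-submit", "text": "Place order", "role": "button"}
element, healed = locate_with_healing(PAGE, stale_locator)
print(element["id"], healed)
```

Counting how often `healed` is true across a suite gives a rough measure of the manual locator fixes avoided, which is one input to a maintenance-reduction figure.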

Challenges in Measuring AI Testing ROI

Measuring ROI from AI testing requires more than tracking execution efficiency or automation coverage. The challenge lies in connecting intelligent testing outcomes with real business performance indicators.

At the same time, enterprises adopting AI testing solutions and AI testing services encounter structural and operational gaps that make ROI calculation less direct.

- Lack of Baseline Metrics: Absence of pre-implementation benchmarks such as defect density, execution latency, and failure rates limits the ability to quantify improvement accurately.

- Attribution Complexity: Concurrent changes in development, infrastructure, and testing layers make it difficult to isolate the direct impact of AI in software testing.

- Dynamic Model Behavior: Continuous model retraining introduces variability in outcomes, requiring adaptive benchmarking strategies rather than fixed measurement baselines.

- Integration and Transition Overheads: Initial efforts required for integrating AI testing into CI/CD pipelines and toolchains can delay visible returns.

- Skill Gaps and Adoption Barriers: Limited expertise in model interpretation and AI-driven validation workflows reduces effective utilization of AI testing solutions.

- Quantifying Experience-Level Impact: Metrics such as user trust, interaction quality, and perceived performance are influenced by testing but are not directly measurable through traditional KPIs.

- Complex Validation Scenarios in AI Systems: Use cases involving LLM testing and chatbot testing require evaluation of semantic accuracy, contextual continuity, and response variability, which demand new measurement models.
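
Two of the challenges above, missing baselines and shifting model behavior, come down to what a current measurement is compared against. The following Python sketch contrasts a fixed pre-implementation baseline with a rolling one that moves with recent releases. The defect density figures are invented for illustration, and the three-release window is an arbitrary assumption.

```python
from statistics import mean


def relative_change(baseline: float, current: float) -> float:
    """Signed change relative to the baseline; negative means a reduction."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


def rolling_baseline(history: list[float], window: int) -> float:
    """Mean of the most recent `window` observations."""
    if len(history) < window:
        raise ValueError("not enough history for the requested window")
    return mean(history[-window:])


# Hypothetical production defect density (defects per KLOC) per release.
pre_ai = [1.2, 1.0, 1.1, 0.9]   # captured before AI testing was introduced
post_ai = [0.8, 0.7, 0.6]       # releases after adoption

latest = post_ai[-1]

# Fixed baseline: average of all pre-implementation releases.
fixed = mean(pre_ai)
change_vs_fixed = relative_change(fixed, latest)

# Adaptive baseline: the three releases immediately before the latest one.
adaptive = rolling_baseline(pre_ai + post_ai[:-1], window=3)
change_vs_adaptive = relative_change(adaptive, latest)

print(f"vs fixed baseline:    {change_vs_fixed:+.1%}")
print(f"vs rolling baseline:  {change_vs_adaptive:+.1%}")
```

The fixed comparison answers "how far have we come since adoption", while the rolling comparison answers "are we still improving". Neither resolves attribution on its own, since concurrent development or infrastructure changes affect both.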

Frameworks and Metrics to Quantify Business Impact

To measure ROI effectively, enterprises need structured frameworks that align AI testing outcomes with business objectives through quantifiable indicators.

- Prediction Accuracy Rate: Evaluates how precisely AI models identify high-risk components by comparing predicted failure zones against actual defect occurrence.

- Defect Containment Efficiency: Measures the share of all detected defects that are caught in pre-production environments rather than escaping into production systems.

- Test Maintenance Reduction Index: Quantifies the decrease in manual script updates due to adaptive and self-healing capabilities within AI testing solutions.

- Execution Optimization Ratio: Assesses reduction in redundant test executions through intelligent prioritization and dynamic test selection.

- Release Stability Index: Tracks post-release system performance by correlating deployment frequency with incident rates and rollback occurrences.

- User Experience Stability Metrics: Includes response consistency, latency variance, and transaction success rates under real-world usage scenarios.

Benchmarking these metrics against traditional testing approaches provides a clear view of incremental value delivered by AI testing services.
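
Several of the metrics above reduce to simple ratios. The Python sketch below shows one possible way to compute them. The formulas are reasonable interpretations rather than standardized definitions: for example, the Prediction Accuracy Rate is expressed here as precision and recall over flagged components. All function names and sample figures are hypothetical.

```python
def prediction_precision(predicted: set[str], actual: set[str]) -> float:
    """Share of components flagged as high-risk that actually had defects."""
    return len(predicted & actual) / len(predicted) if predicted else 0.0


def prediction_recall(predicted: set[str], actual: set[str]) -> float:
    """Share of defective components that were flagged in advance."""
    return len(predicted & actual) / len(actual) if actual else 0.0


def defect_containment_efficiency(pre_prod: int, prod: int) -> float:
    """Pre-production defects as a share of all defects found."""
    total = pre_prod + prod
    return pre_prod / total if total else 0.0


def reduction_ratio(before: float, after: float) -> float:
    """Fractional reduction, used for both maintenance effort and executions."""
    return (before - after) / before if before else 0.0


def rollback_rate(deployments: int, rollbacks: int) -> float:
    """Rollbacks per deployment, one simple input to a release stability index."""
    return rollbacks / deployments if deployments else 0.0


# Hypothetical figures for one quarter.
flagged = {"payments", "auth", "search"}
defective = {"payments", "search", "reports"}

metrics = {
    "prediction_precision": prediction_precision(flagged, defective),
    "prediction_recall": prediction_recall(flagged, defective),
    "defect_containment": defect_containment_efficiency(pre_prod=45, prod=5),
    "maintenance_reduction": reduction_ratio(before=120, after=30),
    "execution_optimization": reduction_ratio(before=2000, after=1200),
    "rollback_rate": rollback_rate(deployments=40, rollbacks=2),
}

for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Running the same functions on data from a pre-AI period gives the comparison against traditional testing approaches.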

Best Practices and Strategic Implementation Approaches

Maximizing ROI from AI testing requires aligning implementation with engineering priorities and business objectives. The focus should remain on targeted adoption, measurable outcomes, and continuous optimization.

A high-impact strategy begins with identifying validation areas that involve complex workflows, high transaction volumes, or critical business dependencies. These areas offer faster and more visible ROI when enhanced through AI-driven testing.

- Define Outcome-Driven KPIs: Establish measurable indicators such as defect containment rate, execution efficiency, and release stability before deploying AI testing services.

- Prioritize High-Complexity Test Scenarios: Apply AI models to systems with dynamic workflows, frequent changes, or high business impact to maximize value realization.

- Ensure High-Quality Training Data: Maintain structured, diverse, and clean datasets to improve model accuracy and reliability in AI testing solutions.

- Adopt Incremental Deployment Models: Introduce AI capabilities in controlled phases to validate performance and minimize operational disruption.

- Integrate with CI/CD Ecosystems: Embed AI testing within deployment pipelines to enable continuous validation aligned with development cycles.

Final Thoughts

Measuring ROI from AI testing requires a shift toward integrated evaluation models that connect testing outcomes with engineering performance and business stability. Enterprises must move beyond isolated metrics and adopt frameworks that capture predictive accuracy, defect containment, and release reliability.

As AI in software testing continues to evolve, organizations that establish structured measurement models and align them with business priorities will gain a measurable advantage. They will improve not only software quality but also operational predictability and customer experience.

At ImpactQA, we focus on translating AI testing into measurable business outcomes. Our AI testing services combine intelligent validation frameworks, domain expertise, and advanced automation to deliver consistent and scalable results. We work closely with enterprises to define ROI metrics, implement AI testing solutions, and ensure that testing strategies directly support business performance.