Gen AI in Healthcare: Key Lessons for QA Professionals in 2025

Quick Summary:

Generative AI is revolutionizing healthcare by streamlining diagnosis, enhancing clinical workflows, and facilitating personalized treatment recommendations. While this progress is promising, safe rollouts depend on rigorous QA practices that address risks in compliance, patient safety, and software performance. This article outlines Gen AI trends in healthcare for 2025, highlights the use of Gen AI in healthcare applications, and distills practical insights for QA professionals responsible for ensuring trustworthy deployments.

Table of Contents:

- Introduction

- Gen AI Trends in Healthcare 2025

- Where QA Fits in Gen AI in Healthcare

- Key Risks and Vulnerabilities QA Must Address

- Testing Strategies for Safe Gen AI Rollouts

- Best Practices for the Use of Gen AI in Healthcare Applications

- Conclusion

Introduction

Healthcare is undergoing a rapid transformation with the arrival of generative AI. From diagnostics to drug discovery, AI is influencing both patient outcomes and provider efficiency. A recent report projects the global healthcare AI market to reach USD 208.2 billion by 2030. Such rapid adoption raises an essential question – how can QA professionals ensure safe and reliable rollouts while clinical operations become increasingly dependent on algorithms?

The answer lies in aligning software quality practices with the speed and complexity of Gen AI in healthcare. What makes this more pressing is that even small inaccuracies in AI-driven healthcare applications can lead to significant clinical or compliance setbacks. QA must evolve to validate outputs that are probabilistic rather than deterministic, demanding new frameworks of testing. More importantly, professionals need to anticipate risks before deployment to ensure that every AI model not only scales but also safeguards patient trust and safety.


Gen AI Trends in Healthcare 2025

Healthcare organizations are experimenting with AI across diagnosis, administrative workflows, and decision support. Beyond these experiments, the Gen AI trends in healthcare for 2025 point to a deeper integration of large language models and predictive algorithms.

Key directions include:

- AI-driven Diagnosis Support: LLM-powered tools generate clinical notes, draft treatment recommendations, and support medical imaging analysis, with the aim of reducing error rates.

- Drug Discovery Acceleration: Generative models simulate molecular structures, cutting early-phase development cycles.

- Patient Engagement Platforms: Conversational AI provides round-the-clock guidance, appointment scheduling, and pre-screening assessments.

- Administrative Automation: Billing, insurance claims, and coding tasks are increasingly augmented by AI-driven documentation.

These advancements emphasize the use of Gen AI in healthcare beyond frontline treatment. Yet, each new function introduces new risks – from false positives in diagnostics to susceptibility to data bias. This makes the QA role central to safe rollouts.

Where QA Fits in Gen AI in Healthcare

Quality Assurance cannot function as a post-deployment check in AI-driven healthcare systems. Instead, QA must integrate throughout the lifecycle of Gen AI in healthcare. Testing must begin at the data preparation stage and continue through model training, integration, and deployment monitoring. This shift makes QA an ongoing partner in development rather than a final gatekeeper.

Three important lessons for QA professionals:

- Continuous Validation: Unlike static applications, generative models change over time as they are retrained, fine-tuned, or updated. QA must verify outputs regularly against evolving datasets and refresh validation cases as new medical data emerges.

- Bias Auditing: Patient data often reflects demographic imbalances. QA must identify where algorithms produce skewed results and ensure equitable outcomes across gender, age, and ethnicity.

- Compliance Mapping: Healthcare applications are tightly bound by HIPAA, GDPR, and regional clinical guidelines. Testing needs to confirm adherence while also validating explainability features that regulators increasingly demand.
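As an illustration of the bias-auditing lesson, the sketch below compares model accuracy across demographic groups and reports the largest gap. It is a minimal example under stated assumptions: the record layout (`prediction`, `label`, and a grouping field such as `sex`) and the function names are hypothetical, and the sample records are hand-made rather than real patient data.

```python
from collections import defaultdict

def accuracy_by_group(records, group_key):
    """Return {group: accuracy} for records holding 'prediction' and 'label'."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for rec in records:
        group = rec[group_key]
        total[group] += 1
        if rec["prediction"] == rec["label"]:
            correct[group] += 1
    return {g: correct[g] / total[g] for g in total}

def max_accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)

# Hypothetical, hand-made records; not real patient data.
records = [
    {"sex": "F", "prediction": 1, "label": 1},
    {"sex": "F", "prediction": 0, "label": 1},
    {"sex": "M", "prediction": 1, "label": 1},
    {"sex": "M", "prediction": 0, "label": 0},
]
per_group = accuracy_by_group(records, "sex")
gap = max_accuracy_gap(per_group)
```

A QA team could fail a test run when `gap` exceeds an agreed tolerance. Accuracy is only one possible metric; sensitivity or false-positive rate per group may matter more for a given clinical use.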

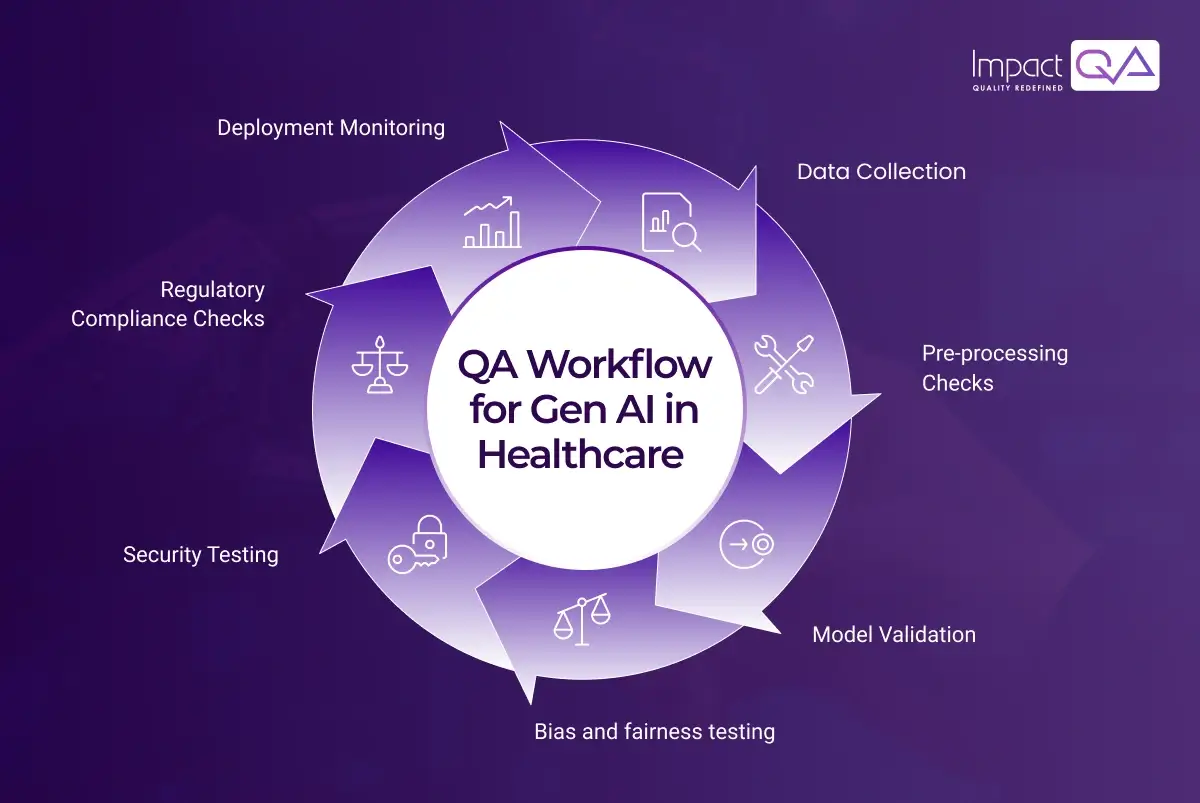

QA Workflow for Gen AI in Healthcare

This workflow shows how QA integrates into AI-powered healthcare rollouts and helps QA professionals manage complexity across Gen AI in healthcare deployments.

In practice:

- Input validation → Raw patient data cleaning and verification.

- Model audit → Testing performance against clinical standards.

- Bias detection → Identifying demographic or disease-specific blind spots.

- Security QA → Data encryption, secure APIs, and access control.

- Post-deployment QA → Regular monitoring for drift and compliance updates.
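The input validation step at the start of this workflow could look something like the following sketch. The schema (`patient_id`, `age`, `systolic_bp`) and the plausibility ranges are assumptions chosen for illustration, not clinical reference values; a real pipeline would take both from the data owners and clinicians.

```python
# Hypothetical schema for illustration only.
REQUIRED_FIELDS = ("patient_id", "age", "systolic_bp")

def validate_record(record):
    """Return a list of problems found; an empty list means the record passed."""
    problems = []
    # Check that every required field is present and not None.
    for field in REQUIRED_FIELDS:
        if record.get(field) is None:
            problems.append(f"missing {field}")
    # Range checks use illustrative bounds, not clinical reference ranges.
    age = record.get("age")
    if age is not None and not (0 <= age <= 120):
        problems.append(f"age out of range: {age}")
    bp = record.get("systolic_bp")
    if bp is not None and not (50 <= bp <= 300):
        problems.append(f"systolic_bp out of range: {bp}")
    return problems

clean = validate_record({"patient_id": "p1", "age": 42, "systolic_bp": 120})
dirty = validate_record({"patient_id": "p2", "age": 130})
```

Returning a list of problems, rather than raising on the first one, lets a test report summarize every defect in a batch before any data reaches model training.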

Key Risks and Vulnerabilities QA Must Address

The rapid adoption of generative AI exposes healthcare systems to vulnerabilities that QA professionals must actively counter. Some of these risks are:

- Incorrect Recommendations: Diagnostic errors due to unverified model outputs.

- Data Privacy Violations: Sensitive patient data being mishandled during AI training.

- Model Drift: Performance deterioration as real-world data shifts.

- Explainability Gaps: Black-box models making it hard for clinicians to trust AI-driven advice.

- Regulatory Breaches: AI outputs conflicting with legal frameworks.

| Sr. No. | Risk | QA Role |
| --- | --- | --- |
| 1. | Diagnostic inaccuracies | Build reference benchmarks for model validation |
| 2. | Data privacy violations | Test compliance with encryption, anonymization standards |
| 3. | Model drift | Monitor outputs with real-time testing scripts |
| 4. | Explainability gaps | Validate interpretability features for clinicians |
| 5. | Regulatory breaches | Map test cases to HIPAA, FDA, and GDPR requirements |

These risks emphasize why QA is indispensable to the Gen AI trends in healthcare 2025 narrative. If not addressed early, such vulnerabilities can escalate into systemic failures, undermining both patient safety and organizational credibility. A structured QA approach establishes trust among clinicians, regulators, and end-users. This positions QA as a continuous safeguard rather than a reactive function.
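For the model drift risk listed above, one way a monitoring script might quantify a shift is the population stability index (PSI), which compares the distribution of a numeric score between a baseline sample and a recent one. The sketch below is one possible approach, not a prescribed method: the binning scheme, the `1e-6` floor, and the sample values are all assumptions.

```python
import math

def population_stability_index(baseline, current, bins=4):
    """PSI between two samples of a numeric score, using equal-width bins
    derived from the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(sample), 1e-6) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

# Hypothetical model confidence scores.
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
shifted_scores = [0.7, 0.8, 0.8, 0.8, 0.7, 0.8, 0.8, 0.7]
psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
```

A commonly cited rule of thumb treats PSI values above roughly 0.25 as a significant shift, but any alerting threshold should be agreed with the clinical and data science teams rather than taken from this sketch.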

Testing Strategies for Safe Gen AI Rollouts

Gen AI in healthcare introduces complexities that traditional QA methods alone cannot address. Models evolve with new data, outputs are probabilistic, and compliance expectations grow stricter with every release. Testing strategies must therefore be designed to anticipate variability, measure accuracy under stress, and confirm reliability at scale. A balanced approach ensures that innovation does not outpace safety.

Recommended testing approaches include:

- Synthetic Data Testing: Generating controlled datasets to assess how models perform with rare medical cases.

- Adversarial Testing: Stress-testing AI against edge cases to reveal susceptibilities.

- Performance Testing: Ensuring applications scale across large patient populations.

- Explainability Validation: Testing dashboards that communicate why AI reached certain conclusions.

- Continuous Monitoring: Deploying automated test cycles to track post-release accuracy.
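Because generative outputs are probabilistic, a single pass/fail assertion on one response says little. A hedged sketch of an alternative is to sample the model repeatedly and assert on the pass rate. Here `fake_model` is a hypothetical stub standing in for a real model call, and `mentions_clinician` is an illustrative check; neither reflects any specific product.

```python
import random

def pass_rate(generate, prompt, check, runs=50):
    """Fraction of `runs` generations for which `check(output)` is True."""
    passed = sum(1 for _ in range(runs) if check(generate(prompt)))
    return passed / runs

# Hypothetical stub: usually, but not always, includes a required safety phrase.
rng = random.Random(0)

def fake_model(prompt):
    if rng.random() < 0.9:
        return "Possible causes include X. Consult a clinician before acting."
    return "Possible causes include X."

def mentions_clinician(output):
    """Illustrative check: the response must refer the user to a clinician."""
    return "clinician" in output.lower()

rate = pass_rate(fake_model, "Summarize this symptom list", mentions_clinician)
```

A test suite could then require `rate` to stay above an agreed minimum, with the number of runs chosen to balance confidence against inference cost.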

Best Practices for the Use of Gen AI in Healthcare Applications

The adoption of Gen AI in healthcare applications requires precise testing frameworks that align with medical, ethical, and compliance standards. Every stage of development must be validated for reliability, accuracy, and transparency. Best practices in QA act as structured checkpoints that protect patient safety while ensuring AI systems remain clinically dependable.

The following practices create a foundation for building trust in AI-driven healthcare solutions.

- Embed QA Early: Begin testing right from dataset preparation to detect errors before they scale into models, saving time and preventing downstream inaccuracies.

- Collaborate with Clinicians: Work closely with medical practitioners to validate outcomes against real-world practices and ensure AI decisions align with clinical expertise.

- Prioritize Explainability: Design transparent dashboards and validation layers that help clinicians understand and trust why the AI system reached specific recommendations.

- Adopt CI/CD Pipelines: Synchronize QA with rapid model updates and ensure every release is tested for accuracy, scalability, and compliance before deployment.

- Monitor Ethical Risks: Continuously evaluate recommendations to prevent demographic biases or health disparities that can compromise patient care.

- Maintain Regulatory Logs: Preserve detailed audit trails of QA activities to demonstrate adherence to HIPAA, FDA, GDPR, and other compliance mandates.
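The CI/CD and regulatory-log practices can be combined into a release gate that both decides whether a model version may ship and records why. The following is a minimal sketch: the metric names, the thresholds, and the `evaluate_release` function are assumptions for illustration, and real thresholds would come from clinical and compliance review.

```python
import json
from datetime import datetime, timezone

# Hypothetical thresholds for illustration only.
THRESHOLDS = {"accuracy": 0.95, "max_group_gap": 0.05}

def evaluate_release(model_version, metrics):
    """Return (approved, audit_entry_json) for a candidate release."""
    failures = []
    if metrics["accuracy"] < THRESHOLDS["accuracy"]:
        failures.append("accuracy below threshold")
    if metrics["max_group_gap"] > THRESHOLDS["max_group_gap"]:
        failures.append("group accuracy gap above threshold")
    # The audit entry records the decision and its inputs with a UTC timestamp.
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "metrics": metrics,
        "failures": failures,
        "approved": not failures,
    }
    return not failures, json.dumps(entry, sort_keys=True)

approved, audit_line = evaluate_release("v1", {"accuracy": 0.97, "max_group_gap": 0.02})
rejected, reject_line = evaluate_release("v2", {"accuracy": 0.90, "max_group_gap": 0.02})
```

In a pipeline, each JSON line would be appended to write-once storage so the trail can be presented during an audit. The sketch only builds the entry and leaves storage to the surrounding system.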


Conclusion

The expansion of Gen AI in healthcare calls for QA teams to move beyond conventional validation. In 2025, testing strategies must accommodate live-learning models, bias detection, and explainability. While the use of Gen AI in healthcare is transforming diagnostics and patient engagement, without rigorous QA, adoption remains at risk of compromising clinical safety. This is where specialized partners like ImpactQA deliver value. Our healthcare testing expertise encompasses advanced areas, including EPIC software testing, which is crucial for validating electronic health records (EHRs). With EPIC systems integrating AI-driven workflows, accuracy and compliance become non-negotiable, and ImpactQA ensures interoperability, patient data integrity, and regulatory adherence at scale.

As the industry embraces Gen AI trends in healthcare 2025, QA professionals must focus on building scalable, ethical, and compliant frameworks. Those who succeed will redefine how healthcare technology supports patient outcomes.